LLMs, Large Language Models, or so-called AI, remain probabilistic algorithms, physically able to process multiple connections through the use of neural-type chips. By processing many more data points, they are capable of refining likelihoods and so providing more accurate results. LLMs remain linear. They move step by step, with each step weaving a denser web of mathematical probabilities, thereby narrowing outcomes. This step-by-step approach makes them linear.

Because they are linear, LLMs can only deal with the complicated. They cannot deal with the complex. Complexity deals with contradictory processes that diverge, often negating each other. Here we have the old giving rise to the new, or where the emerging new is not yet fully formed and therefore is seen as the least probable outcome. LLMs deal with the actual or existing old, but they cannot deal with the potentially new. Complexity is therefore dynamic, changing, evolving, presenting different outcomes, making it improbable, non-linear.

But complexity is the way the world works. If the world were merely complicated, it would remain relatively unaltered: change would take place within fixed boundaries and would soon be exhausted. But the world does change, often violently and unpredictably.

The brain is able to conquer complexity because it is analogue, not digital. Our brain is directly connected to the outside world through our senses. Digitising means mimicking the world. Digitisation will always be a wall between reality and the absorption of it. The wall becomes increasingly porous as algorithms advance and bring the digital copy closer to the real, but it remains a wall nonetheless.

When the hyperscalers hype AGI, or artificial general intelligence, they claim it will mimic the human brain. Currently, LLMs have to be trained. They require human intervention to guide them into making the correct choices. They are labour intensive. The hope, rather than the hype, about AGI turns out to be that LLMs will be able to dispense with human training because they have been trained on sufficient information, trillions of data points, thereby becoming self-replicating.

That will still not make them clever, because quantity in this case does not turn into quality. It will not generate self-learning theory. Theory is needed because the appearance of a thing does not coincide with its inner essence: appearance is always modified by its interactions with the external and often localised world, which help to modify (confuse) its final but not static form. This constant interplay between essence and presence requires theory, which is not self-evident, because each unique phenomenon expresses distinct but as yet undisclosed or undescribed interactions. Hence the need for a specific theory applicable only to that phenomenon. This is why human intelligence is needed.

But even in the realm of the probable, LLMs find their limitations. Most companies that have adopted them have settled for the cost-effective adequate rather than for the satisfactory. LLMs can fulfil tasks, but not to the level of expertise exercised by the workers they replace, because they lack the final subtleties that only localised human experience provides.

The limitations of so-called AI need to be understood in order to estimate how much of it is being oversold by those thirsting for funding capital. Yes, LLMs can accomplish more complicated tasks than traditional algorithms. Yes, their benefit will be found in downstream corporations, that is, in the manufacturing, distribution and services sectors. Yes, they will in time be labour saving. Yes, they will allow cost cutting rather than revenue generation, which will still add to profits.

But in the upstream corporations, the hyperscalers, the likes of Meta, Amazon, Alphabet or Microsoft, there is an emerging crisis of overinvestment. According to comments carried by CNBC, what is taking place is the greatest peacetime sectoral investment in the history of capitalism. Throughout the history of capitalism, new technologies have attracted more investment than their industries could profitably support. One instance of this was the Railway Mania, which broke out in the mid-1840s and whose resolution produced a general economic crisis. Another example was the dot-com bubble. Today’s behemoth is the AI bubble.

In one sense this overinvestment is different, not simply because of its sheer scale, but because of its lopsidedness. Due to Taiwanese manufacturing prowess and capacity, chips are available. But there is very little else available. It’s like having car engines but not the car bodies to place them in. This is primarily due to a hollowed-out US industrial base and limited power supplies. Simply put, the US does not have the infrastructure to support the hyperscaler centres. The result is that 30-50% of these centres are being delayed or scrapped due to power grid failures and critical equipment backlogs, which stretch from three to five years and often depend on imports, such as transformers from China.

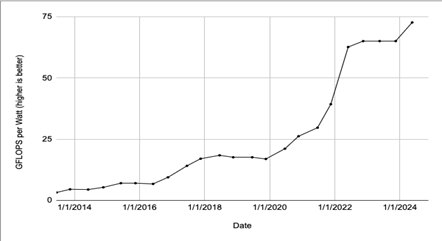

This mismatch could be ruinous. Nvidia, the main supplier of chip hardware, insists on purchase up front. But those chips are often warehoused, waiting for data centres to be built and hooked up to reliable power supplies. By then these chips could be obsolete, given Nvidia’s rapid product cycle. For example, Nvidia’s Blackwell series of chips is 25 times more energy efficient than its previous H100 series, as Nvidia’s own graph shows. Given electricity bottlenecks and prices, this is the difference between operating a profitable and a loss-making data centre.
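The arithmetic behind that last point can be sketched in a few lines. This is only an illustration, not Nvidia’s or any operator’s costing method: the 25x ratio is the figure quoted above, while the workload size, the electricity price and the function names are hypothetical placeholders chosen for the example.

```python
# Illustrative only: every figure except the 25x ratio is a made-up placeholder.
EFFICIENCY_GAIN = 25.0  # Blackwell vs H100 energy efficiency, as quoted in the text


def electricity_cost(energy_kwh: float, price_per_kwh: float) -> float:
    """Electricity bill for a workload that consumes energy_kwh."""
    return energy_kwh * price_per_kwh


def compare_generations(old_energy_kwh: float,
                        price_per_kwh: float,
                        gain: float = EFFICIENCY_GAIN) -> tuple[float, float]:
    """Return (old_cost, new_cost) for the same workload on older and newer chips."""
    # The same work on chips that are `gain` times more efficient
    # needs 1/gain of the energy.
    new_energy_kwh = old_energy_kwh / gain
    return (electricity_cost(old_energy_kwh, price_per_kwh),
            electricity_cost(new_energy_kwh, price_per_kwh))


if __name__ == "__main__":
    # Hypothetical workload: 1,000 kWh on the older chips at 0.10 per kWh.
    old_cost, new_cost = compare_generations(old_energy_kwh=1000.0,
                                             price_per_kwh=0.10)
    print(f"older chips: {old_cost:.2f}, newer chips: {new_cost:.2f}")
```

Whatever placeholder values are used, the ratio between the two bills stays at 25 to 1, which is why a warehoused older chip can turn into a liability once power is scarce or expensive.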

But it is not only concerns about insufficient building materials, skilled labour shortages and power bottlenecks which dominate. There are also concerns about insuring the data centres. These projects run into billions and are riddled with unquantifiable risks: delays in construction, unreliable electricity supplies and the rapid depreciation of chips, which makes valuations problematic.

Then there is the threat of open-source competition. These models are less computing intensive and, by using their access to the internet, avoid the need to hyperscale. This is already happening in China. Open-source models also pose a threat to subscriptions. Currently all the hyperscalers are subsidising subscriptions, often to the tune of $12 for every $1 earned, to keep them affordable while they build scale. This is affordable for the likes of Microsoft and Alphabet, but not for the likes of OpenAI, which is why it has resorted to taking advertising, having said it would never do that.

Circular funding continues, with companies investing in each other to secure sales while boosting their valuations by duping Wall Street. This is still working, but only just, as Taiwan Semiconductor’s recent results highlight: revenue growth was sharply lower in the final quarter of 2025, at an average 3.5%, compared with 42% in the first quarter. And of course, as much of the new investment is debt fuelled rather than cash flow driven, there is pressure to execute major layoffs, which already total nearly 100,000 in 2026, to preserve cash flow for investment purposes.

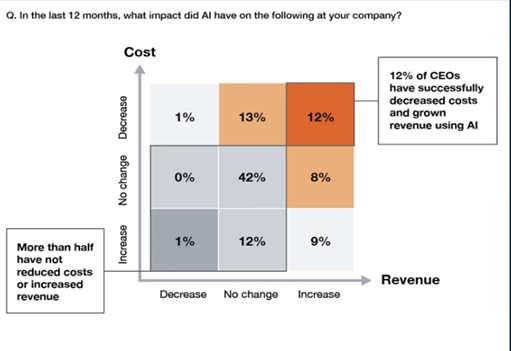

Adoption remains patchy. 2025 was supposed to be the inflection year, the year when adoption generalised. It has not happened, as the podcast AI Isn’t Delivering (And CEOs Know It) reveals. Only 12% of firms have decreased costs while boosting revenue. This is industry wide. Cost savings and cost increases are evenly balanced. We are still in the realm where herd mentality prevails over accounting caution, where the potential eclipses the actual, while the potential itself is more limited and more finite than expected.

Conclusion

In summary, AI is still caught up in no man’s land while choking on debt. That there is potential is not in doubt, but managing and exploiting this potential is something else. Capitalism has not yet achieved either. What it has managed instead is to throw enormous sums of money at it, and in doing so it has created an economic dependency on this industry which, should it fail, will have economy-wide financial consequences. This can be seen in the latest US GDP figures, where much of the growth was dependent on AI spending. So too the stock market. The Financial Times on 8 May 2026 reported how the 12% rise in share prices on Wall Street in April was limited to just 42 AI and chip corporations, calling this rise “fragile” and unprecedented.

But not only that. Should the ardour cool and stock prices tumble, then, as I pointed out in my earlier article on inequality, spending by the top 10% will collapse, potentially turning recession into slump.

AI is mis-sold, overestimated and overinvested. The probability that it will retrench at some point is high. This is not inference, but knowledge born from dialectical experience. The question is when, not if. But the answer is not yet, because the current earnings reports from the hyperscalers and chip manufacturers covering Q1 2026 show continued robust investment and demand going forward. This explains why the financial fallout from the war in Iran and the blocking of the Strait of Hormuz has been so subdued so far.